Inverse Matrices and Their Properties

TLDR

This video explains the concept of an inverse matrix: a matrix that, when multiplied by the original matrix, yields the identity matrix. It outlines how to find the inverse of a 2x2 matrix: swap the diagonal entries, change the signs of the off-diagonal entries, and divide by the determinant. For larger matrices, it details a multi-step process: find the matrix of minors, then the matrix of cofactors, then the adjugate (adjoint), and finally divide by the original determinant. Because matrix division is undefined, inverse matrices provide a way to solve matrix equations that would otherwise call for division. The video concludes by noting that, with practice, the inverse algorithms can be carried out without a matrix calculator.

Takeaways

- 😀 An inverse matrix A^-1 multiplied by the original matrix A yields the identity matrix, just as a number multiplied by its reciprocal equals 1.

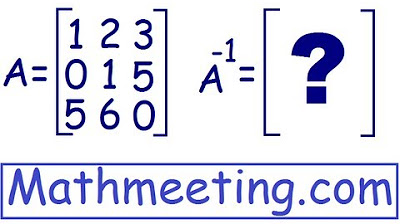

- 💡 The inverse of a 2x2 matrix can be found by swapping the diagonal entries, changing the signs of the off-diagonal entries, and dividing by the determinant.

- 📐 A matrix with a determinant of 0 does not have an inverse, as it would require dividing by 0.

- 🤔 Matrix division is undefined, but inverse matrices provide a workaround: multiply both sides of an equation by the inverse.

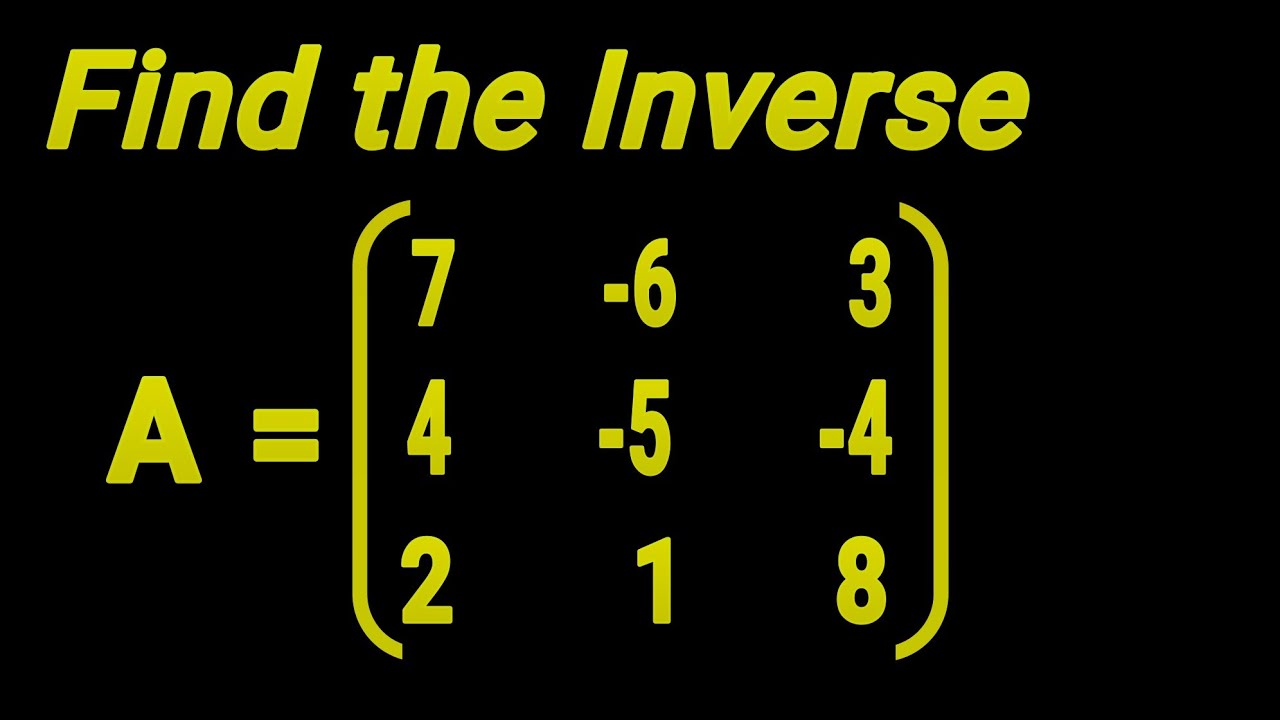

- 🧮 Finding the inverse of a 3x3 matrix involves multiple steps: computing the matrix of minors, the matrix of cofactors, and the adjugate matrix, then dividing by the determinant.

- ⏳ Inverses of larger matrices become very tedious to compute manually, so a matrix calculator is typically used instead.

- 🎓 Now that the basics of matrices and their operations have been covered, we can examine more abstract linear algebra concepts.

- ✅ Checking comprehension through practice problems is important after learning new matrix concepts.

- 📈 Understanding inverse matrices provides a foundation for more advanced matrix techniques.

Q & A

What is the notation used to denote the inverse of a matrix?

-The inverse of matrix A is denoted as A with a negative one superscript, written as A^-1. This is analogous to the notation used for inverse functions.

How is an inverse matrix similar to the reciprocal of a number?

-When you multiply a number by its reciprocal, you get 1. Similarly, when you multiply a matrix by its inverse, you get the identity matrix, which is like the number 1 for matrices.

What is the process for finding the inverse of a 2x2 matrix?

-To find the inverse of a 2x2 matrix: 1) compute the determinant; 2) swap the diagonal entries and negate the off-diagonal entries; 3) divide every entry of the new matrix by the determinant. For A = [[a, b], [c, d]], this gives A^-1 = (1/(ad - bc)) [[d, -b], [-c, a]].
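The three steps can be sketched in Python as follows. This is a minimal illustration, assuming the matrix is given as nested lists `[[a, b], [c, d]]` with integer (or `Fraction`) entries; `inverse_2x2` and `matmul` are hypothetical helper names. It uses `fractions.Fraction` so the result stays exact, and it multiplies the result back against the original to confirm that the identity matrix appears.

```python
from fractions import Fraction

def inverse_2x2(m):
    """Invert [[a, b], [c, d]] using the swap / negate / divide recipe."""
    (a, b), (c, d) = m
    det = a * d - b * c  # step 1: the determinant
    if det == 0:
        raise ValueError("determinant is 0, so the matrix is singular")
    # step 2: swap the diagonal entries and negate the off-diagonal ones
    adj = [[d, -b], [-c, a]]
    # step 3: divide every entry by the determinant (exactly, as fractions)
    return [[Fraction(x, det) for x in row] for row in adj]

def matmul(x, y):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))] for i in range(len(x))]

A = [[4, 7], [2, 6]]
A_inv = inverse_2x2(A)
print(A_inv)  # [[Fraction(3, 5), Fraction(-7, 10)], [Fraction(-1, 5), Fraction(2, 5)]]
print(matmul(A, A_inv) == [[1, 0], [0, 1]])  # True: A times A^-1 is the identity
```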

When does a matrix not have an inverse?

-A matrix cannot have an inverse if its determinant is 0. Such a matrix is called a singular matrix.
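A quick check for the 2x2 case, sketched with hypothetical helper names `det_2x2` and `is_singular`. The example matrix `[[1, 2], [2, 4]]` is chosen because its second row is twice its first, which forces the determinant to 0. The exact comparison with 0 assumes integer entries; with floating-point entries a tolerance would be needed.

```python
def det_2x2(m):
    """Determinant ad - bc of a 2x2 matrix [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c

def is_singular(m):
    """A square matrix is singular (has no inverse) when its determinant is 0."""
    return det_2x2(m) == 0

print(is_singular([[1, 2], [2, 4]]))  # True: 1*4 - 2*2 = 0
print(is_singular([[4, 7], [2, 6]]))  # False: 4*6 - 7*2 = 10
```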

How do you use inverse matrices to perform matrix division?

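-Since there is no matrix division operation, you multiply both sides of an equation by the inverse of a matrix to effectively "divide" by that matrix. Because matrix multiplication is not commutative, the inverse must be applied from the same side on both sides of the equation: from AX = B, multiplying both sides on the left by A^-1 gives X = A^-1 B.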
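As a sketch of this idea, the system 2x + y = 3, x + 3y = 5 can be written as AX = B and solved with X = A^-1 B. The helpers `inverse_2x2` and `matmul` are hypothetical names for illustration, and exact `Fraction` arithmetic is an assumption made so the result can be checked precisely.

```python
from fractions import Fraction

def inverse_2x2(m):
    """Inverse of [[a, b], [c, d]]: (1 / (ad - bc)) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular matrix: no inverse")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

def matmul(x, y):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))] for i in range(len(x))]

# Solve A X = B. A^-1 multiplies from the LEFT on both sides: X = A^-1 B.
A = [[2, 1], [1, 3]]
B = [[3], [5]]
X = matmul(inverse_2x2(A), B)
print(X)                  # [[Fraction(4, 5)], [Fraction(7, 5)]]
print(matmul(A, X) == B)  # True: the solution satisfies the original equation
```

Writing X = B A^-1 instead would not even be defined here, since B is 2x1 and A^-1 is 2x2, which illustrates why the side matters.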

What is the matrix of minors?

-The matrix of minors is an intermediate step in finding the inverse of a 3x3 matrix. Each entry is the determinant of the 2x2 matrix left over after blocking out the row and column of the corresponding entry in the original matrix.
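A minimal Python sketch of the matrix of minors for a 3x3 matrix stored as nested lists. The function names and the example matrix `[[1, 2, 3], [0, 1, 4], [5, 6, 0]]` are illustrative choices, not taken from the video.

```python
def det_2x2(m):
    """Determinant ad - bc of a 2x2 matrix [[a, b], [c, d]]."""
    (a, b), (c, d) = m
    return a * d - b * c

def matrix_of_minors(m):
    """Entry (i, j) is the determinant of the 2x2 matrix left after
    blocking out row i and column j of the 3x3 matrix m."""
    minors = []
    for i in range(3):
        row = []
        for j in range(3):
            sub = [[m[r][c] for c in range(3) if c != j]
                   for r in range(3) if r != i]
            row.append(det_2x2(sub))
        minors.append(row)
    return minors

M = [[1, 2, 3], [0, 1, 4], [5, 6, 0]]
print(matrix_of_minors(M))  # [[-24, -20, -5], [-18, -15, -4], [5, 4, 1]]
```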

What is the purpose of the matrix of cofactors?

-The matrix of cofactors applies a checkerboard pattern of plus and minus signs, starting with + in the top-left corner, to the entries of the matrix of minors so that each entry gets the correct sign.
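The checkerboard can be expressed as multiplying entry (i, j) by (-1)^(i + j), counting rows and columns from 0. A minimal sketch, using a hypothetical function name; the input is the matrix of minors of `[[1, 2, 3], [0, 1, 4], [5, 6, 0]]`, worked out by hand for this example.

```python
def matrix_of_cofactors(minors):
    """Apply the checkerboard of signs: entry (i, j) is multiplied by
    (-1) ** (i + j), so the top-left sign is +."""
    return [[(-1) ** (i + j) * value for j, value in enumerate(row)]
            for i, row in enumerate(minors)]

minors = [[-24, -20, -5], [-18, -15, -4], [5, 4, 1]]
print(matrix_of_cofactors(minors))  # [[-24, 20, -5], [18, -15, 4], [5, -4, 1]]
```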

What does taking the adjugate (adjoint) do?

-Taking the adjugate (adjoint) transposes the matrix of cofactors by reflecting the entries across the main diagonal.
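Putting the 3x3 steps together gives the minimal sketch below. `inverse_3x3` is a hypothetical name, and exact `Fraction` arithmetic with integer entries is an assumption. One shortcut not described above: the determinant is obtained by cofactor expansion along the first row, reusing the cofactors already computed.

```python
from fractions import Fraction

def inverse_3x3(m):
    """Invert a 3x3 matrix via minors -> cofactors -> adjugate -> divide by det."""
    def det_2x2(s):
        return s[0][0] * s[1][1] - s[0][1] * s[1][0]

    # Matrix of minors: block out row i and column j, take the 2x2 determinant.
    minors = [[det_2x2([[m[r][c] for c in range(3) if c != j]
                        for r in range(3) if r != i])
               for j in range(3)] for i in range(3)]
    # Matrix of cofactors: checkerboard of signs, + in the top-left corner.
    cof = [[(-1) ** (i + j) * minors[i][j] for j in range(3)] for i in range(3)]
    # Determinant by cofactor expansion along the first row.
    det = sum(m[0][j] * cof[0][j] for j in range(3))
    if det == 0:
        raise ValueError("determinant is 0, so the matrix is singular")
    # Adjugate: transpose the cofactors (reflect across the main diagonal).
    adj = [[cof[j][i] for j in range(3)] for i in range(3)]
    # Finally, divide every entry of the adjugate by the determinant.
    return [[Fraction(x, det) for x in row] for row in adj]

M = [[1, 2, 3], [0, 1, 4], [5, 6, 0]]
print(inverse_3x3(M) == [[-24, 18, 5], [20, -15, -4], [-5, 4, 1]])  # True (det is 1)
```

Even this 3x3 case takes nine 2x2 determinants, which hints at why larger inverses are usually left to a calculator.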

Why are larger inverse matrices usually found using a calculator?

-The multi-step process for finding inverse matrices becomes extremely tedious for larger matrices. Using a matrix calculator avoids pages of arithmetic.

What topics in linear algebra build on the concepts of matrices and inverses?

-Understanding matrices, matrix operations, determinants, and inverses provides a foundation for more advanced, abstract ideas in linear algebra such as vector spaces, linear transformations, and eigenvalues.

Outlines

📚 Understanding Inverse Matrices

This paragraph explains the concept of an inverse matrix, noting its similarities to and differences from inverse functions. It explains that while matrix division is not possible, multiplying a matrix by its inverse yields the identity matrix, so matrix equations can be solved in a way analogous to using division in ordinary algebra.

🧮 Finding a 2x2 Inverse Matrix

This paragraph provides the specific algorithm for finding the inverse of a 2x2 matrix. It involves dividing by the determinant, swapping the diagonal elements, changing the signs of the off-diagonal terms, and multiplying by the original matrix to verify.

🤔 Applications of Inverse Matrices

This paragraph discusses uses for inverse matrices, such as solving matrix equations and finding inverses of matrix products. It notes that not all matrices have inverses: singular matrices, whose determinant is zero, do not.
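For the inverse of a product, the standard identity is (AB)^-1 = B^-1 A^-1: the order reverses. A minimal 2x2 check in Python, with hypothetical helper names and example matrices chosen only for illustration:

```python
from fractions import Fraction

def inverse_2x2(m):
    """Inverse of [[a, b], [c, d]]: (1 / (ad - bc)) * [[d, -b], [-c, a]]."""
    (a, b), (c, d) = m
    det = a * d - b * c
    if det == 0:
        raise ValueError("singular matrix: no inverse")
    return [[Fraction(d, det), Fraction(-b, det)],
            [Fraction(-c, det), Fraction(a, det)]]

def matmul(x, y):
    """Multiply two matrices stored as nested lists."""
    return [[sum(x[i][k] * y[k][j] for k in range(len(y)))
             for j in range(len(y[0]))] for i in range(len(x))]

A = [[1, 2], [3, 4]]
B = [[0, 1], [1, 1]]
lhs = inverse_2x2(matmul(A, B))
print(lhs == matmul(inverse_2x2(B), inverse_2x2(A)))  # True: B^-1 A^-1
print(lhs == matmul(inverse_2x2(A), inverse_2x2(B)))  # False for these matrices
```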

😵‍💫 The Complex Process for 3x3 Matrices

This paragraph details the multi-step process for finding a 3x3 inverse matrix, involving computing the matrix of minors, matrix of cofactors, adjugate matrix, and finally dividing by the determinant. It notes this becomes laborious for larger matrices.

Keywords

💡Inverse matrix

💡Identity matrix

💡Matrix multiplication

💡Determinant

💡Singular matrix

💡Matrix of minors

💡Matrix of cofactors

💡Adjugate matrix

💡Linear algebra

💡Matrix calculator
